Recovery of Human Body Scans

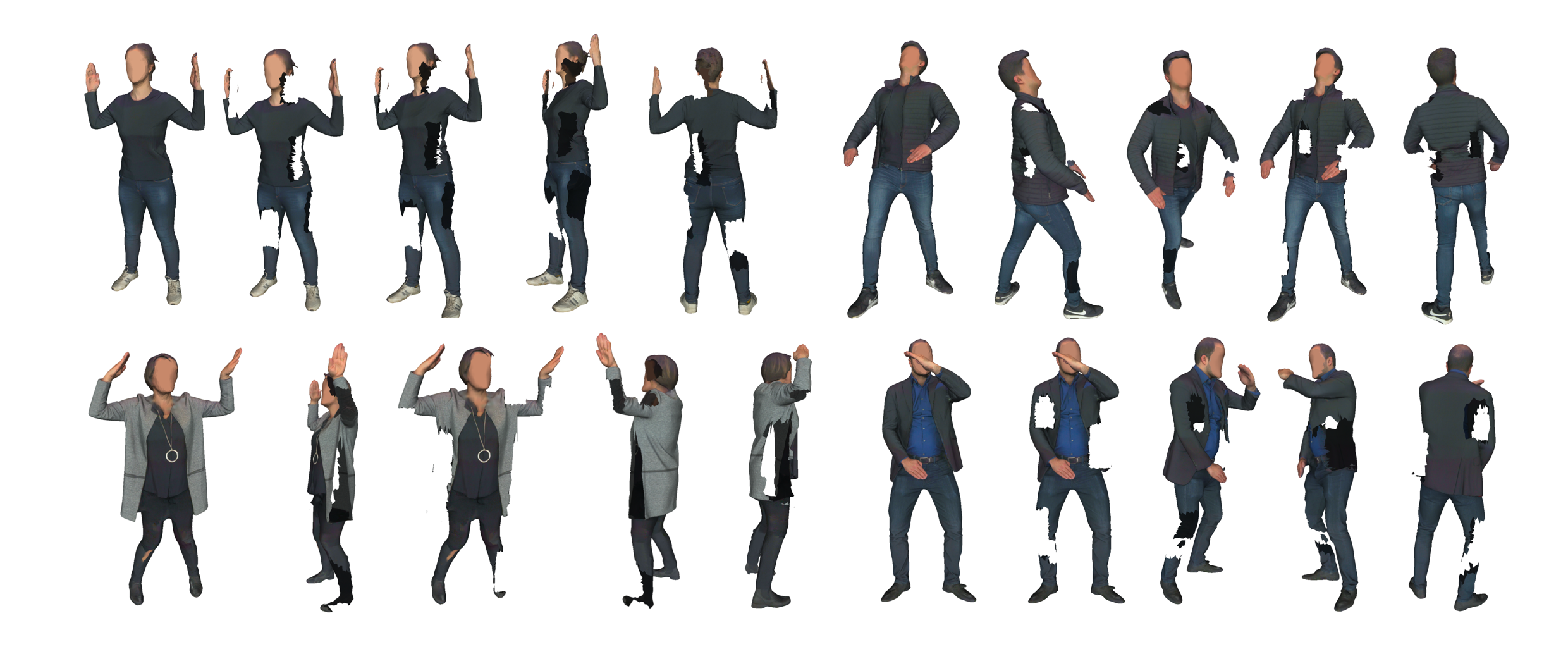

The task of this challenge is to accurately reconstruct a full 3D textured mesh from a partial 3D human scan acquired with the Shapify booth of Artec3D. The scans are similar in quality to the 3DBodyTex dataset presented at 3DV’18 but were collected following a different protocol. We refer to this new dataset as 3DBodyTex.v2. 3DBodyTex.v2 is an original dataset that will be shared with the research community after signing a data license agreement. It consists of about 2500 clothed scans with a large diversity in clothing and poses, covering 500 different subjects in up to 6 poses each. In addition, it includes an extended version of the 3DBodyTex data with over 800 scans of 230 people in tight-fitting clothing and up to 3 poses per subject.

The training data consists of pairs, (X, Y), of partial and complete scans, respectively. The goal is to recover Y from X. As part of the challenge, we share routines to generate partial scans X from the given complete scans Y.
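The shared routines are not reproduced here. As an illustration only, the sketch below shows one hypothetical way to derive a partial scan X from a complete mesh Y: it cuts holes by deleting every face that touches a sphere centred on a randomly chosen vertex. The function name `cut_spherical_holes`, its parameters (`num_holes`, `radius`, `seed`), and the hole-cutting strategy itself are assumptions, not the challenge's official routines.

```python
import math
import random
from typing import List, Sequence, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]


def cut_spherical_holes(
    vertices: Sequence[Vertex],
    faces: Sequence[Face],
    num_holes: int = 3,
    radius: float = 0.05,
    seed: int = 0,
) -> Tuple[List[Vertex], List[Face]]:
    """Hypothetical partial-scan generator (not the official routine):
    remove every face with a vertex inside one of `num_holes` spheres
    centred on randomly chosen vertices."""
    rng = random.Random(seed)
    centres = [vertices[rng.randrange(len(vertices))] for _ in range(num_holes)]

    # Mark vertices that fall strictly inside any hole sphere.
    removed = [any(math.dist(v, c) < radius for c in centres) for v in vertices]

    # Keep only faces whose three vertices all survive.
    kept_faces = [f for f in faces if not any(removed[i] for i in f)]

    # Re-index the surviving (still referenced) vertices so the mesh is compact.
    used = sorted({i for f in kept_faces for i in f})
    remap = {old: new for new, old in enumerate(used)}
    new_vertices = [vertices[i] for i in used]
    new_faces = [tuple(remap[i] for i in f) for f in kept_faces]
    return new_vertices, new_faces
```

Deleting whole faces rather than individual vertices keeps the output a valid triangle mesh, and the fixed `seed` makes a given (X, Y) pair reproducible.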

However, participants are free to devise their own way of generating partial data, either as an augmentation or as a complete alternative.

- Any custom procedure should be reported, with a description and an implementation, among the deliverables.

- A quality check is performed to keep the level of defects reasonable.

- The partial scans are generated synthetically.

- For privacy reasons, all meshes are anonymized by blurring the shape and texture of the faces, similarly to the 3DBodyTex data.

- During evaluation, the face and hands are ignored because their shape in the raw scans is less reliable.
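The exact evaluation metric is not specified in this section. The following minimal sketch shows how ignoring the face and hands could work with a symmetric nearest-neighbour (Chamfer-style) distance. It assumes per-point body-part labels on the ground truth; the label names `"face"` and `"hands"`, the `IGNORED` set, and the function `masked_chamfer` are all hypothetical. Ground-truth points in ignored regions are dropped, and so are predicted points whose closest ground-truth point lies in such a region.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
IGNORED = {"face", "hands"}  # hypothetical body-part labels to skip


def _nearest(p: Point, cloud: Sequence[Point]) -> Tuple[int, float]:
    """Brute-force nearest neighbour of p in cloud: (index, distance)."""
    best = min(range(len(cloud)), key=lambda i: math.dist(p, cloud[i]))
    return best, math.dist(p, cloud[best])


def masked_chamfer(
    pred: Sequence[Point],
    gt: Sequence[Point],
    gt_labels: Sequence[str],
) -> float:
    """Hypothetical symmetric mean nearest-neighbour distance that
    ignores face and hand regions of the ground truth."""
    # Ground truth -> prediction: skip ground-truth points in ignored regions.
    gt_terms: List[float] = [
        _nearest(g, pred)[1]
        for g, label in zip(gt, gt_labels)
        if label not in IGNORED
    ]
    # Prediction -> ground truth: skip predicted points whose closest
    # ground-truth point lies in an ignored region.
    pred_terms: List[float] = []
    for p in pred:
        idx, d = _nearest(p, gt)
        if gt_labels[idx] not in IGNORED:
            pred_terms.append(d)
    if not gt_terms or not pred_terms:
        raise ValueError("no points left after masking")
    return sum(gt_terms) / len(gt_terms) + sum(pred_terms) / len(pred_terms)
```

For example, with ground truth `[(0, 0, 0), (1, 0, 0), (5, 0, 0)]` labelled `["torso", "torso", "hands"]` and a prediction `[(0, 0, 0), (1, 0, 0), (9, 0, 0)]`, the error near the hand is ignored and the result is `0.0`. The brute-force search keeps the sketch dependency-free; full-resolution scans would need a spatial index such as a k-d tree.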

More information can be found here: https://gitlab.uni.lu/cvi2/cvpr2021-sharp-workshop/