SPARK Challenge: SPAcecraft Recognition leveraging Knowledge of Space Environment

organized as part of the AI4Space workshop, in conjunction with

Acquiring information and knowledge about objects orbiting Earth is known as Space Situational Awareness (SSA). SSA has become an important research topic thanks to multiple large initiatives, e.g., from the European Space Agency (ESA) and the American National Aeronautics and Space Administration (NASA).

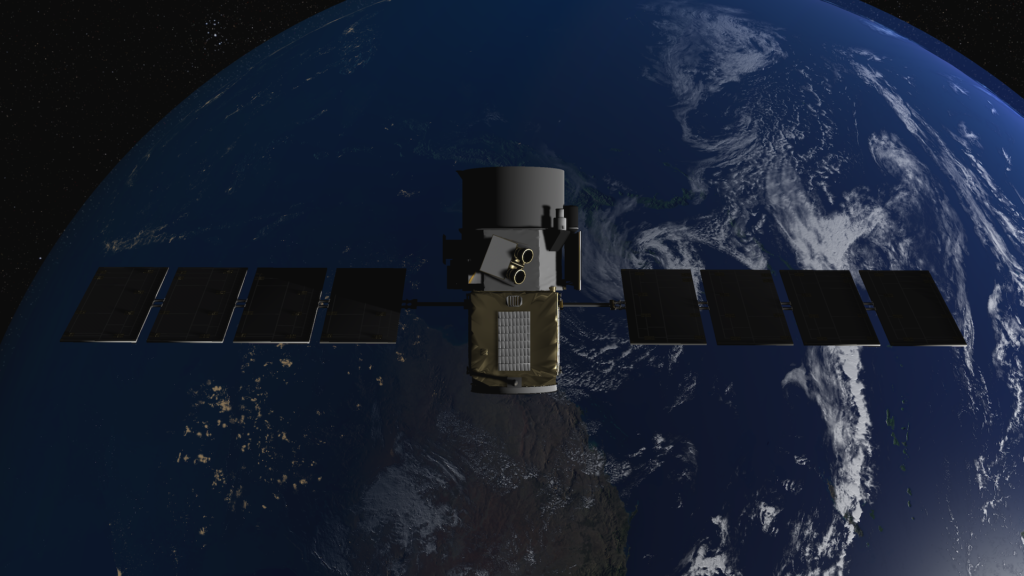

Vision-based sensors are a great source of information for SSA. They are especially useful for spacecraft navigation and rendezvous operations, where two satellites meet in the same orbit and perform close-proximity operations, e.g., docking, in-space refuelling, and satellite servicing. Moreover, vision-based target recognition is an important component of SSA and a crucial step towards reaching autonomy in space. However, although major advances have been made in image-based object recognition in general, very little has been tested or designed for the space environment.

State-of-the-art object perception algorithms are deep learning approaches that require large datasets for training. The lack of sufficient labelled space data has limited the research community's efforts to develop data-driven space object perception approaches. Indeed, in contrast to terrestrial applications, the quality of spaceborne imaging is highly dependent on many specific factors such as varying illumination conditions, low signal-to-noise ratio, and high contrast.

The SPARK 2024 Challenge aims to design data-driven approaches for spacecraft semantic segmentation and trajectory estimation. SPARK will use data synthetically simulated with a state-of-the-art rendering engine, in addition to data collected from the Zero-Gravity Laboratory (Zero-G Lab) facility at SnT – Interdisciplinary Center for Security, Reliability and Trust, University of Luxembourg.

Competition Streams

This year’s competition will include two streams.

Stream 1 – Spacecraft Semantic Segmentation

Stream 2 – Spacecraft Trajectory Estimation

Dataset

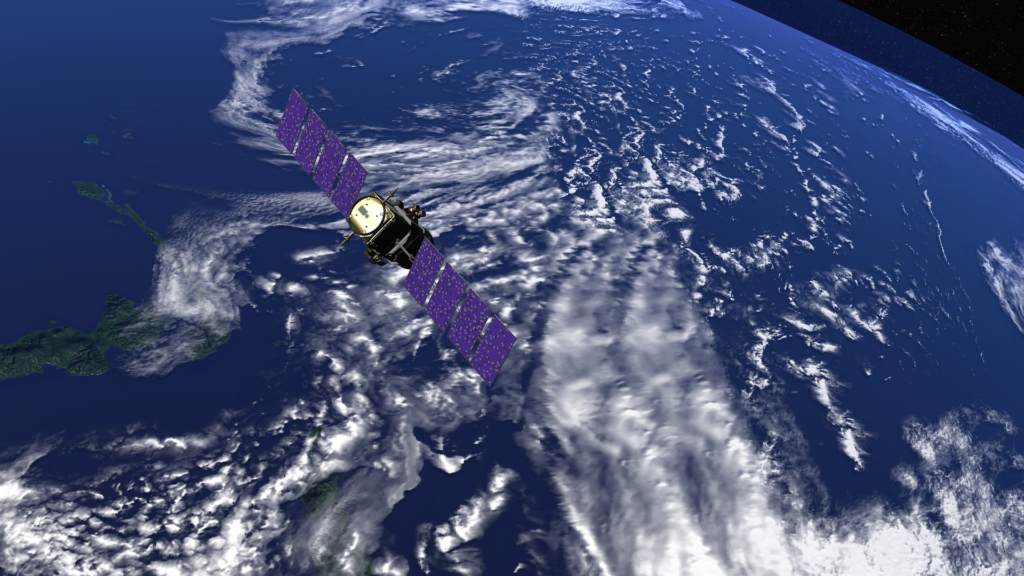

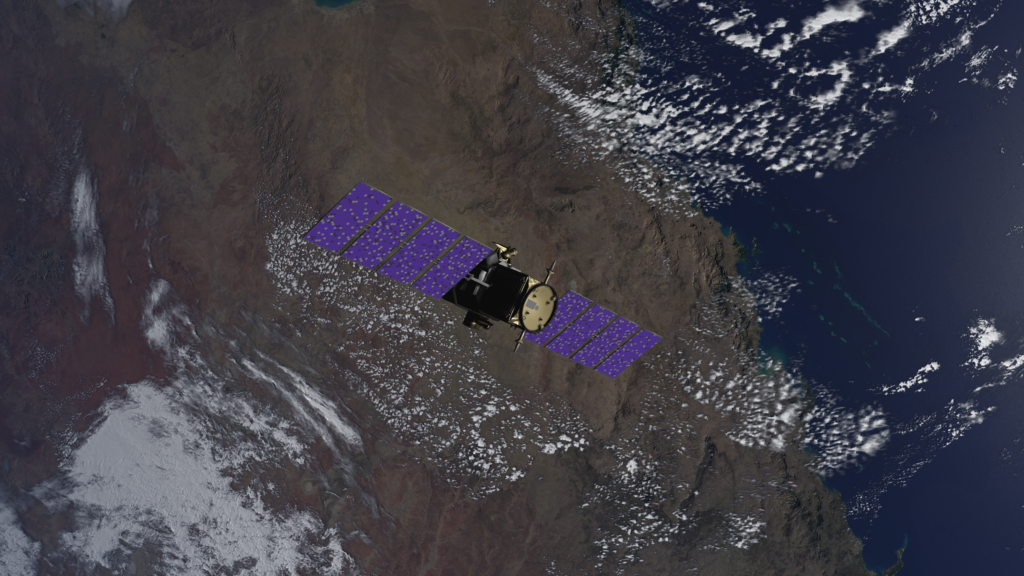

The SPARK simulator is a unique and new tool based on the Unity3D game engine.

The simulation environment models:

This model represents the observer that is equipped with different vision systems.

A pinhole camera model was used with known intrinsic camera parameters and optical sensor specifications.
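As a rough illustration of what a pinhole camera model with known intrinsics implies, the sketch below projects a 3D point expressed in the camera frame onto the image plane. The `Intrinsics` type, the `project_point` helper, and the numeric values are hypothetical and chosen for illustration only; they are not the SPARK camera parameters, and the model ignores lens distortion.

```python
from typing import NamedTuple, Tuple


class Intrinsics(NamedTuple):
    """Pinhole intrinsics in pixel units (hypothetical container, not a SPARK type)."""
    fx: float  # focal length along the image x axis
    fy: float  # focal length along the image y axis
    cx: float  # principal point, x coordinate
    cy: float  # principal point, y coordinate


def project_point(point_cam: Tuple[float, float, float], k: Intrinsics) -> Tuple[float, float]:
    """Project a 3D point in the camera frame (z pointing forward) to pixel coordinates."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must lie in front of the camera (z > 0)")
    # Perspective division followed by scaling and shifting with the intrinsics.
    u = k.fx * x / z + k.cx
    v = k.fy * y / z + k.cy
    return (u, v)


# Assumed example values, NOT the actual SPARK sensor specification.
K = Intrinsics(fx=1000.0, fy=1000.0, cx=512.0, cy=512.0)
pixel = project_point((0.5, -0.25, 10.0), K)
print(pixel)
```

A point on the optical axis maps to the principal point `(cx, cy)`, which is a quick sanity check when plugging in the intrinsics distributed with a dataset.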

In addition to simulated data, the dataset will also contain real images acquired in a cutting-edge space-simulating facility.