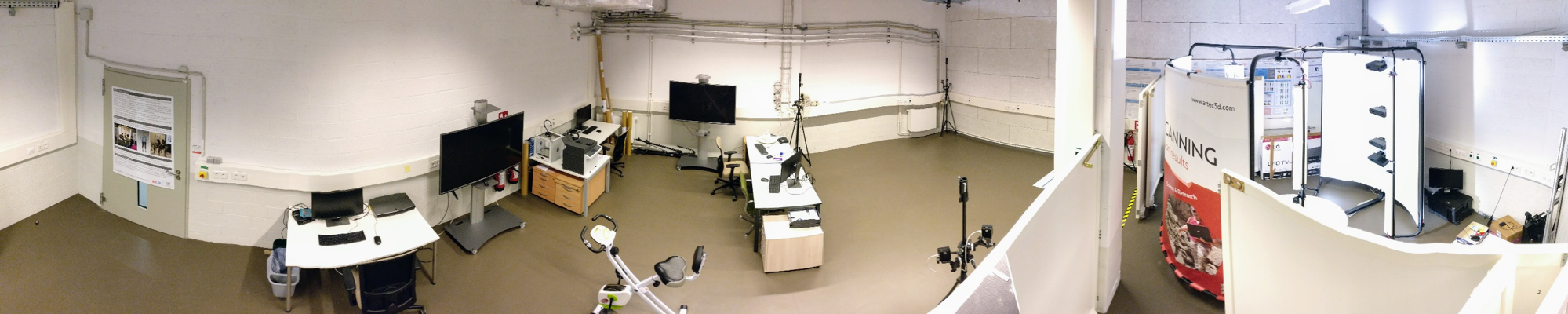

The SnT Computer Vision Lab is affiliated with the Computer Vision, Imaging and Machine Intelligence (CVI²) Research Group led by Dr. Djamila Aouada. The lab provides state-of-the-art facilities for controlled measurements, testing, and validation, which are essential to support experimentally driven research in computer vision and imaging. The activities of the lab focus on 3D sensing and analysis, with applications ranging from security and safety to assistive computer vision for healthcare. The lab supports research on 3D data enhancement, 2D/3D shape modelling, 3D motion analysis, and face modelling and recognition. Dedicated techniques are developed for matching, filtering, classification, learning, recognition, detection and estimation, with extensive use and development of deep learning approaches. Since 2017, the laboratory has been located in Maison du Nombre (MNO), Campus Belval.

Laboratory

Past and ongoing research and development activities in the SnT CVI² Research Group are:

2D/3D shape modelling – The focus is on modelling non-rigid deformations of shapes in 2D and in 3D, accounting for both their topological and geometric properties.

Activity recognition – The aim is to develop activity recognition methods that are robust to real-world conditions, where illumination, texture, occlusions and viewpoint change constantly. Our focus is on using RGB-D cameras, from which 3D information can be coupled with colour information, thus reducing sensitivity to illumination and texture.

3D face recognition – Different phases of face recognition are considered, from 3D face reconstruction through feature extraction to classification. Face dynamics are also explored in the context of expression/emotion recognition.

Multi-modal data fusion – Both fusion of different image modalities and fusion at different levels of abstraction are considered. Examples include fusion of 3D data captured with a low-resolution depth sensor with 2D images from a high-resolution camera, and multi-view fusion of RGB-D data.

Depth data denoising and super-resolution – The aim is to enhance depth videos by developing denoising and super-resolution algorithms that take advantage of the temporal information contained in the raw videos. Special attention is given to videos containing dynamically deforming objects.

RGB-D multi-view calibration and 3D reconstruction – In order to deal with occlusions, data from multiple RGB-D cameras at different viewpoints can be fused. To that end, the relative pose of each camera needs to be estimated while taking advantage of all the available data captured by the RGB-D cameras.
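
As an illustration of the relative pose estimation mentioned above, the following is a minimal sketch and not the group's own method. It assumes that matched 3D points seen by two cameras are already available, and it uses the standard Kabsch (SVD-based) least-squares alignment to recover the rotation and translation between the two views. The function name `estimate_rigid_transform` and the synthetic data are hypothetical and serve illustration only.

```python
import numpy as np


def estimate_rigid_transform(src, dst):
    """Least-squares rigid transform (R, t) such that dst ≈ R @ src + t.

    src, dst: (N, 3) arrays of matched 3D points, assumed to be the same
    physical points expressed in the frames of two different cameras.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)

    # Centre both point sets on their centroids.
    src_centroid = src.mean(axis=0)
    dst_centroid = dst.mean(axis=0)

    # 3x3 cross-covariance matrix of the centred points.
    H = (src - src_centroid).T @ (dst - dst_centroid)

    # Kabsch: the optimal rotation comes from the SVD of H.
    U, _, Vt = np.linalg.svd(H)

    # Correct for a possible reflection so that det(R) = +1.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T

    t = dst_centroid - R @ src_centroid
    return R, t


if __name__ == "__main__":
    # Synthetic check: points in camera A, and the same points in camera B.
    rng = np.random.default_rng(0)
    points_a = rng.normal(size=(100, 3))
    angle = np.deg2rad(20.0)
    R_true = np.array([
        [np.cos(angle), -np.sin(angle), 0.0],
        [np.sin(angle), np.cos(angle), 0.0],
        [0.0, 0.0, 1.0],
    ])
    t_true = np.array([0.5, -0.1, 0.3])
    points_b = points_a @ R_true.T + t_true

    R_est, t_est = estimate_rigid_transform(points_a, points_b)
    print("rotation error:", np.abs(R_est - R_true).max())
    print("translation error:", np.abs(t_est - t_true).max())
```

In practice, correspondences between RGB-D views are noisy and partly wrong, so an alignment step of this kind would typically be wrapped in a robust scheme (for example outlier rejection or iterative refinement) rather than used on its own.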